The fastest-growing security story of the year is simple and brutal: a voice that sounds exactly like your boss, a perfect invoice, a midnight rush. This is the 2026 AI scam playbook, and it wins by compressing trust and time into a single click.

1) The 2026 spike: cheap models, stolen context, zero friction

Two forces made AI scams explode. First, high-quality models got cheap and easy to run. Second, thieves now gather precise context in minutes — signatures, meeting notes, voicemail samples, vendor names, even calendar screenshots. Together they power AI scams that look and sound like us. Deepfake audio turns a brief clip into a persuasive command. Text models draft flawless messages in your tone. Image tools generate believable IDs and payslips. The result: scam emails and calls that bypass our usual alarms because they borrow our own language and timing.

AI phishing emails are now tuned to your exact workflow. They mirror last quarter’s invoice template, echo yesterday’s Slack phrasing, land at the moment you expect a payment reminder, and reference projects scraped from public docs. The enemy stopped guessing and started imitating.

2) The big three: voice, invoices, and jobs

Deepfake voice scam. A number pops up that looks right. The voice is right too — pacing, filler words, urgency. A short scene: an operations lead walks to the kitchen with her phone at 7:12 a.m. The caller, sounding like her CEO, says a partner is waiting for a bridge payment, asks her to override the usual approvals and send a wire now. The request is small enough to feel reasonable, urgent enough to skip checks. The call ends with, “I’ll be in a meeting; text me when it’s done.” The actor hangs up and blocks replies. Minutes later, the money is gone.

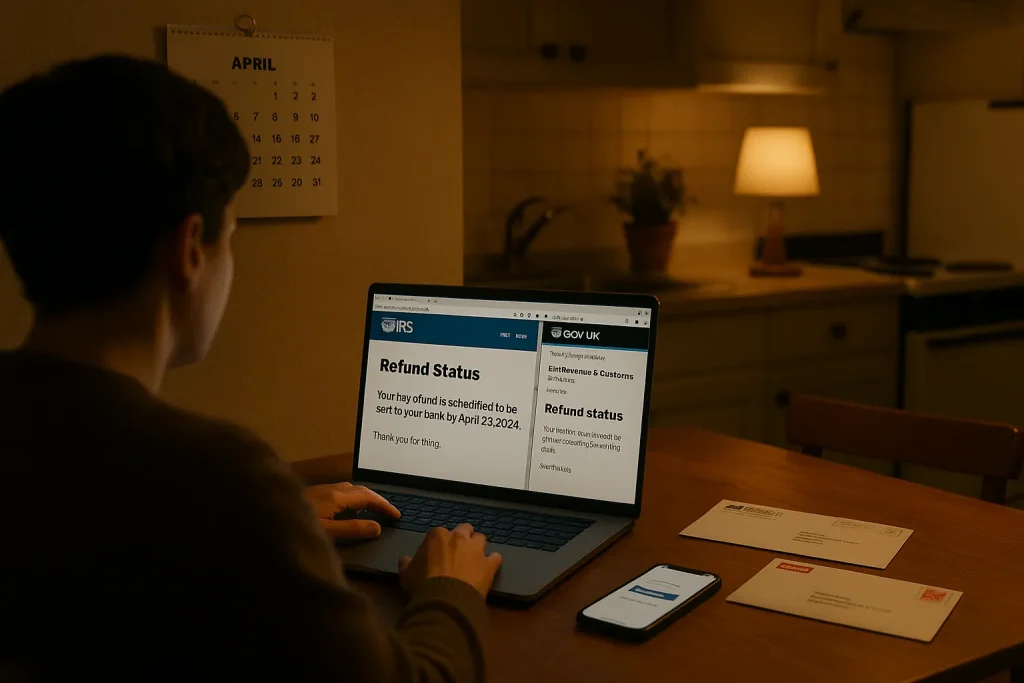

Fake invoice scam. Attackers register a domain one character off your vendor’s, clone the invoice layout, and reply inside an existing thread they’ve quietly forwarded themselves. They swap banking details and push a friendly “updated remit notice.” The deception is linguistic more than visual: it feels like your vendor’s voice. Refund angles ride the same rails — “updated tax refund,” “amended payout method,” “correction to last week’s credit.” Instead of asking for your password, they ask for your payment.
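The "one character off" trick can be checked mechanically. Below is a minimal sketch, assuming you keep your own allowlist of vendor domains; `KNOWN_VENDOR_DOMAINS` and the `.example` addresses are hypothetical. It flags any sender whose domain is within one edit of a trusted one.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum inserts, deletes, and substitutions."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # delete from a
                current[j - 1] + 1,      # insert into a
                previous[j - 1] + cost,  # substitute (or match)
            ))
        previous = current
    return previous[-1]


def classify_sender_domain(sender: str, known_domains: set[str]) -> str:
    """Return 'known', 'lookalike', or 'unknown' for a sender address."""
    domain = sender.rsplit("@", 1)[-1].strip().lower()
    if domain in known_domains:
        return "known"
    # One edit away from a trusted domain is the classic lookalike pattern.
    if any(edit_distance(domain, trusted) <= 1 for trusted in known_domains):
        return "lookalike"
    return "unknown"


# Hypothetical allowlist, for illustration only.
KNOWN_VENDOR_DOMAINS = {"acme-supplies.example"}

print(classify_sender_domain("billing@acme-supplies.example", KNOWN_VENDOR_DOMAINS))
print(classify_sender_domain("billing@acme-suppl1es.example", KNOWN_VENDOR_DOMAINS))
```

This only catches single-character edits. Swapped letters count as two edits, and visually identical Unicode characters need a separate check, so treat it as one signal alongside the callback, not a replacement for it.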

Job and recruiter lures. AI now writes breezy, on-brand recruiter notes and generates brief screening calls using cloned voices. The goal: harvest identity docs, collect small “equipment fees,” or redirect you to malware-laced onboarding portals. When cash is tight, slick opportunities multiply; resisting them means verifying, not dreaming. If you need options that don’t start with a fee, explore real side hustles in 2026 before engaging with unsolicited offers that feel too polished to question.

3) Phishing written by AI — and the 5‑second test

Today’s scams don’t look broken. Grammar is clean. Logos are crisp. Thread context is spot‑on. What gives them away are tiny seams in timing, control, and verification. If you want to spot AI scams fast, use the quick test below and then slow the exchange down on your terms.

- Source: Is this channel and sender identity under your control? If it arrived by new number, new domain, or a forwarded thread, freeze.

- Money/credentials: Is there a request to move funds, buy codes, or share MFA/ID? If yes, verification moves off the current thread.

- Timing: Is there an invented deadline, after‑hours pressure, or a can’t‑talk‑now excuse? Urgency is a tell.

- Out‑of‑band check: Can you confirm via a known number in your contacts, a fresh calendar invite, or your vendor portal? If not, you don’t proceed.

- Mismatch: One character off in the domain, odd currency, or slightly wrong signature format means stop. No exceptions.

This five‑point scan catches most AI scams and scam emails in seconds because it looks for power plays, not typos. When the message involves payouts, add a second person to approvals automatically. Always. For refund lures, verify only inside your official account — you can track your 2026 tax refund safely without clicking any email link.
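For teams that want the five-point scan written down as a rule, here is a minimal sketch. The `ScanAnswers` fields and the three outcomes ("stop", "hold", "proceed") are assumptions made for illustration; a person still answers the five questions, and the code only applies the decision logic consistently.

```python
from dataclasses import dataclass


@dataclass
class ScanAnswers:
    """One reader's answers to the five questions in the checklist."""
    known_channel: bool              # Source: a channel and sender you already control
    asks_money_or_credentials: bool  # Money/credentials: funds, codes, MFA, or ID requested
    pressure_or_deadline: bool       # Timing: invented deadline or can't-talk-now excuse
    verified_out_of_band: bool       # Out-of-band check: confirmed via a known number or portal
    mismatch: bool                   # Mismatch: domain, currency, or signature is off


def five_point_scan(answers: ScanAnswers) -> tuple[str, list[str]]:
    """Return a decision plus the list of checks that raised a flag."""
    flags = []
    if not answers.known_channel:
        flags.append("source")
    if answers.asks_money_or_credentials:
        flags.append("money/credentials")
    if answers.pressure_or_deadline:
        flags.append("timing")
    if answers.mismatch:
        flags.append("mismatch")

    if answers.mismatch:
        return "stop", flags      # a mismatch means stop, no exceptions
    if flags and not answers.verified_out_of_band:
        return "hold", flags      # nothing moves until verified off-thread
    return "proceed", flags


decision, flags = five_point_scan(
    ScanAnswers(known_channel=True, asks_money_or_credentials=True,
                pressure_or_deadline=True, verified_out_of_band=False,
                mismatch=False)
)
print(decision, flags)
```

The ordering is a design choice: a mismatch overrides everything else, and any other flag simply parks the request until the out-of-band check is done.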

4) Fast cases, fast lessons

A regional nonprofit received a warm, familiar note from a frequent grantee asking to update bank details before a deadline. The attached letterhead looked perfect. The deputy director paused only because the time zone in the signature didn’t match prior emails. A quick call to the old number saved a five‑figure transfer. Lesson: when money flows to a new destination, you verify through a channel you choose.

A freelancer passed a video interview with a well‑known company and got a same‑day offer. The contract had perfect branding; the portal asked for a small upfront “equipment” payment, to be reimbursed with his first paycheck. He asked the recruiter to join a live video from a corporate domain and to send a calendar invite from the company’s system. The thread went silent. Lesson: no legitimate employer requires upfront payments; real HR will confirm via official systems.

A controller received a dawn call from a voice that matched her CEO and a message thread that mirrored a real vendor. The ask: split a larger invoice into two wires to a new account to “avoid bank holds.” She used the 5‑second test, declined in the moment, and called the CEO’s known number. The CEO was on a plane with phone off. Lesson: the right refusal is procedural, not personal. Your policy shields your people.

These are the kinds of AI email scams that responders at banks, incident hotlines, and corporate security teams see in 2026. The pattern repeats: perfect surface, pressure underneath. When in doubt, apply the checklist, then escalate slowly.

5) If you’ve been targeted — or hit — act by the clock

For wires and ACH: call your bank’s fraud line immediately and request a recall or a hold. Name the transfer ID, amount, and beneficiary account. Then file a report with your national cybercrime portal and local law enforcement. If workplace funds are involved, notify your security and finance leads at once so they can start vendor and payroll checks.

For cards and payment apps: freeze the card, dispute charges in‑app, and change your password and PIN. Enable bank‑level alerts for new payees and large transactions. If credentials may be exposed, rotate passwords, revoke app tokens, and reset MFA seeds. In practical terms, that is what to do if an AI scam hits you: stop the flow, preserve evidence, and force resets where the attacker might return.

For voice deepfakes: save call logs and voicemails and document the spoofed number. Share samples with your IT/security team; they can flag lookalike domains, update email rules, and warn vendors. For emails: forward full headers to your security contact so they can sinkhole domains and teach your filters to spot similar sends.
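Full headers are worth forwarding because they carry signals the visible message hides. As a rough illustration, the sketch below uses Python's standard `email` package to flag two of them: a `Reply-To` domain that differs from the `From` domain, and failed SPF, DKIM, or DMARC results recorded in `Authentication-Results`. The sample message and its `.example` domains are invented, and real triage would look at far more than this.

```python
from email import message_from_string
from email.utils import parseaddr


def domain_of(header_value: str) -> str:
    """Extract the lowercased domain from an address header, or '' if absent."""
    _, address = parseaddr(header_value or "")
    return address.rsplit("@", 1)[-1].lower() if "@" in address else ""


def header_warnings(raw_message: str) -> list[str]:
    """Return human-readable warnings found in a raw message's headers."""
    msg = message_from_string(raw_message)
    warnings = []

    from_domain = domain_of(msg.get("From", ""))
    reply_domain = domain_of(msg.get("Reply-To", ""))
    if reply_domain and reply_domain != from_domain:
        warnings.append(
            f"Reply-To domain {reply_domain} differs from From domain {from_domain}"
        )

    # Receiving servers record their verdicts in Authentication-Results.
    auth_results = " ".join(msg.get_all("Authentication-Results", [])).lower()
    for check in ("spf", "dkim", "dmarc"):
        if f"{check}=fail" in auth_results:
            warnings.append(f"{check.upper()} failed according to Authentication-Results")
    return warnings


# Invented sample message, for illustration only.
SAMPLE = (
    "From: Vendor Billing <billing@vendor.example>\n"
    "Reply-To: billing@vendor-payments.example\n"
    "Authentication-Results: mx.example; spf=pass; dkim=pass; dmarc=fail\n"
    "Subject: Updated remit notice\n"
    "\n"
    "Please use the new account.\n"
)

for warning in header_warnings(SAMPLE):
    print(warning)
```

A clean result here proves nothing on its own: a lookalike domain can pass every authentication check, which is why the callback to a known number still applies.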

Reporting matters. It creates a trail that banks and platforms can act on. When in doubt, report the AI scam to the authorities and include transaction IDs, domains, phone numbers, and exact timestamps. The earlier the signal, the better the odds of interruption.

6) Make fraud expensive: safeguards for people and teams

Individuals and freelancers. Default to two‑person money moves for any wire, crypto, or large Zelle/Venmo transfer. Lock vendor and payout changes behind an out‑of‑band call using a number saved months ago, not pulled from an email signature. Keep a short personal runbook: known bank numbers, employer numbers, and support channels you will use to verify. On phones, enable silence‑unknown‑callers and label spam; on mail, turn on link warnings and mailbox protections. If your device adds new AI features, learn how they affect call screening and authentication: the AI features rumored for the iPhone in 2026, such as call summaries and live voicemail, can help you pause, not rush.

Vendors and invoices. Publish your invoice change policy on your site: “We never change bank details by email. Call this number to verify.” Embed this in every invoice footer. In your accounting system, lock payee edits to a separate role with audit trails. Require a live callback to a known contact before approving changes. In email, banner external mail and block auto‑forwarding rules that hide thread splits.
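The payee-change rules above amount to a small state machine: no approval before the callback, no self-approval, and every step logged. Here is a minimal sketch of that gate, assuming a hypothetical `PayeeChangeRequest` record; real accounting systems expose their own roles and audit features, so this only illustrates the logic.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class PayeeChangeRequest:
    """A pending change to a vendor's bank details (hypothetical model)."""
    vendor: str
    new_account: str
    requested_by: str
    callback_confirmed: bool = False
    approvers: set[str] = field(default_factory=set)
    audit_log: list[str] = field(default_factory=list)

    def _log(self, event: str) -> None:
        timestamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(f"{timestamp} {event}")

    def confirm_callback(self, caller: str, number_on_file: str) -> None:
        """Record a live callback made to the contact number already on file."""
        self.callback_confirmed = True
        self._log(f"callback by {caller} to {number_on_file}")

    def approve(self, approver: str) -> None:
        """Approve the change; requires a prior callback and a second person."""
        if not self.callback_confirmed:
            raise PermissionError("callback to a known contact is required first")
        if approver == self.requested_by:
            raise PermissionError("requester cannot approve their own change")
        self.approvers.add(approver)
        self._log(f"approved by {approver}")

    def can_apply(self) -> bool:
        """True only after a confirmed callback and at least one other approver."""
        return self.callback_confirmed and len(self.approvers) >= 1


request = PayeeChangeRequest("Acme Supplies", "NEW-ACCOUNT-REF", requested_by="ap.clerk")
request.confirm_callback(caller="controller", number_on_file="number from vendor master file")
request.approve("controller")
print(request.can_apply(), len(request.audit_log))
```

Raising an error instead of returning `False` is deliberate: a blocked approval should be loud enough that someone asks why it was attempted.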

Executives and approvals. Create a “no exceptions” doctrine in writing: nobody requests emergency payments over chat or text. If a leader must make an urgent exception, they trigger a pre‑agreed code phrase and a calendar event from an official domain, and finance still calls back the number on file. Train leaders to embrace being slowed down. It’s not about trust; it’s about repeatable trust.

Tools that help without drama. DMARC and SPF reduce direct spoofing of your own domain; lookalike domains are separate registrations that can pass those checks, so they need their own monitoring. File‑integrity checks catch swapped PDFs. Browser isolation and attachment sandboxes neuter one‑click malware. On phones, call‑back buttons tied to your own contacts list, not the incoming ID, sidestep spoofed numbers. To defend against deepfake voice scams, short verification phrases over a known line (“last two letters of today’s codeword, please”) end most actor runs instantly.
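A DMARC record only helps once it asks receivers to act. DMARC policies are published as a DNS TXT record at `_dmarc.<your domain>`, and a policy of `p=none` only monitors. The sketch below assumes you have already fetched the record text (the DNS lookup is left out) and checks whether the policy is set to quarantine or reject; the sample records are illustrative.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record such as 'v=DMARC1; p=reject' into tag/value pairs."""
    tags = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


def dmarc_is_enforcing(record: str) -> bool:
    """True when the record is DMARC and tells receivers to quarantine or reject."""
    tags = parse_dmarc(record)
    return tags.get("v") == "DMARC1" and tags.get("p", "").lower() in {"quarantine", "reject"}


# Illustrative record strings, not taken from any real domain.
print(dmarc_is_enforcing("v=DMARC1; p=reject; rua=mailto:dmarc@example.com"))
print(dmarc_is_enforcing("v=DMARC1; p=none"))
```

This covers only your own domain. It says nothing about a lookalike domain an attacker registers, which can publish a perfectly valid record of its own.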

FAQ

What are the most common AI email scams in 2026?

The dominant patterns are fake invoice threads, AI phishing emails that copy your vendor’s tone, and recruiter lures tied to document or fee requests. Deepfake voice calls often arrive alongside an email to push urgency and bypass approvals.

How can I spot a deepfake voice scam in 5 seconds?

Treat urgency plus money as a stop sign. Hang up, then call back using a number in your contacts, not the recent call. Ask for a pre‑agreed code or verify via a calendar invite from an official domain. Small audio glitches matter less than control of the channel.

What should I do if I receive a fake invoice?

Stop the thread, refuse to click links, and verify payee changes by calling a known number on file. Check domain spelling and prior bank details. In your accounting system, lock edits and require two‑person approval before any new beneficiary is paid.

Who should I report an AI scam to and how?

Contact your bank’s fraud team first with transaction IDs, then file with your national cybercrime portal and local police. Include domains, emails, phone numbers, and timestamps. Early reporting improves the chance of stopping or recovering funds.

Summary: Make time your ally. Verify off‑thread, force two‑person approvals, and memorialize simple rules your team can follow at speed. Most AI scams lose power when you slow them down.